McKinsey puts it plainly: “Poor management of tech debt hamstrings companies’ ability to compete.” For enterprise technology leaders, that statement lands differently than it does for a startup. “Legacy” rarely means “obsolete” — it means critical. These systems — mainframes processing millions of daily transactions, monolithic ERPs governing supply chains, decade-old .NET applications — are the operational backbone of the business. Replacing them isn’t optional, and it isn’t simple.

The numbers make the cost of inaction concrete. According to McKinsey research, technical debt now accounts for 20–40% of organizations’ total technology estate value — and CIOs estimate that 10–20% of every new product dollar is quietly diverted just to manage debt they’ve already accumulated. Meanwhile, cloud-native competitors carry none of that weight. They iterate faster, scale cheaper, and integrate AI seamlessly. The challenge isn’t keeping the lights on. It’s transforming the power grid without interrupting the flow of electricity.

The Cost of Doing Nothing

A system that functions but blocks AI integration, requires rare skill sets, or demands days of manual testing before each release is no longer working for the business. It’s an anchor. And unlike most business problems, legacy debt doesn’t hold steady while you deliberate — three clocks are ticking simultaneously.

The Financial Clock. According to a 2024 Gartner survey, companies spend over 70% of their IT budget on “run the business” activities, leaving less than 30% for growth and innovation. That imbalance compounds every year on top of the debt burden McKinsey describes (20–40% of total technology estate value), and companies with fragmented legacy systems are 30% more likely to experience AI implementation delays. Modernization aims to flip that ratio. In an era where AI readiness is a competitive differentiator, this isn’t just a technology problem; it’s a strategic one. Every quarter spent firefighting is a quarter your cloud-native competitors spent building.

The Security Clock. Many legacy systems run on end-of-life infrastructure with unpatched vulnerabilities. Older systems, frequently without modern encryption, API security, or vendor support, are among the easiest entry points for attackers. IBM’s research puts the average cost of a data breach at $4.45 million, a figure that has risen 15% in three years. And in financial services and healthcare, that exposure isn’t just financial. It’s a compliance violation waiting to happen, and regulators are not known for patience.

The Talent Clock. The workforce that built and knows these systems is retiring. Industry estimates suggest that nearly all RPG talent will have exited the workforce by 2030, and fewer than 2,000 COBOL programmers graduated worldwide in 2024. At the same time, top engineers expect modern toolchains — and will choose employers who offer them. Modernizing the stack is as much a talent acquisition and retention strategy as it is a technology one. Wait long enough, and the institutional knowledge walking out the door becomes the hardest problem to solve.

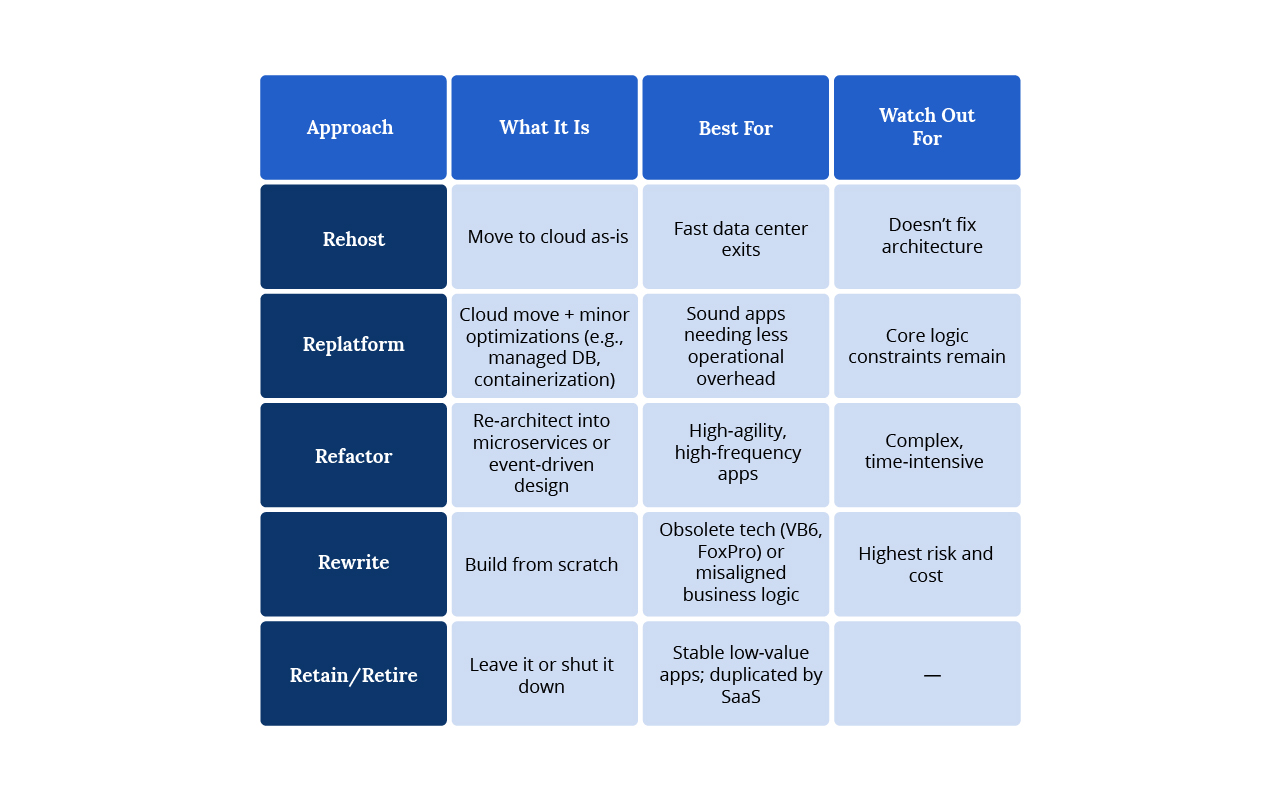

The Modernization Spectrum

No single approach fits every application. The right strategy depends on business value and technical condition.

The Strategic Pattern: Strangler Fig

Choosing the right approach is only half the equation — how you execute is equally critical. “Big Bang” rewrites — shut down Friday, launch Monday — are a recipe for disaster. Instead, the Strangler Fig Pattern replaces functionality incrementally while the legacy system continues to run.

- Identify a slice — pick one domain (e.g., User Profiles)

- Build the new service — as a modern microservice

- Route traffic — via API gateway; legacy handles everything else

- Repeat — until the old system is an empty shell, safely decommissioned
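
The routing step above can be sketched in a few lines. This is a minimal illustration, not a production gateway configuration: the path prefix `/profiles` and the backend names `profile-service` and `legacy-monolith` are hypothetical, chosen only to mirror the User Profiles example.

```python
# Hypothetical route table: path prefixes that have already been migrated.
MIGRATED_PREFIXES = {
    "/profiles": "profile-service",  # the first slice, e.g. User Profiles
}

# Hypothetical name for the system that still serves everything else.
LEGACY_BACKEND = "legacy-monolith"


def choose_backend(path: str, migrated: dict[str, str] = MIGRATED_PREFIXES) -> str:
    """Return the name of the backend that should handle this request path."""
    # Check longer prefixes first so a more specific migrated route wins.
    for prefix in sorted(migrated, key=len, reverse=True):
        if path == prefix or path.startswith(prefix + "/"):
            return migrated[prefix]
    # Anything not yet migrated still goes to the legacy system.
    return LEGACY_BACKEND


if __name__ == "__main__":
    print(choose_backend("/profiles/42"))       # routed to the new service
    print(choose_backend("/billing/invoices"))  # still handled by legacy
```

Each completed slice adds one entry to the route table. When every path resolves to a new service, the legacy fallback is no longer reached and can be retired.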

Case Study — Luxury Travel

At a global luxury hospitality company, internal development teams had been constrained for years by a decade-old .NET codebase. The system limited feature velocity, degraded user experience, and carried mounting performance costs.

Using the Strangler Fig pattern and event-driven design, the engineering team migrated incrementally from a Windows-tied .NET architecture to .NET 6 on Linux, with Azure Functions and Azure Cosmos DB handling scalable, event-triggered services. A new CI/CD pipeline replaced a manual testing and release process.

The result: twice the development velocity, reduced operational costs through serverless migration, and a transformation from manual to automated QA at scale. As their VP of Technology noted, the engagement worked because it was treated as a strategic partnership, not a vendor transaction.

Breaking the Monolith: Microservices

With the right pattern in place, architecture decisions come next. In a monolith, scaling one function means scaling everything. A memory leak in Reporting can crash Billing. Microservices solve this.

- Independent scalability — scale only the Checkout service on Black Friday

- Technology flexibility — Python for the Recommendation Engine, Java for Inventory

- Fault isolation — one service fails; the rest keep running
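
Fault isolation can be illustrated with a small sketch. It assumes a caller that aggregates results from two services and substitutes a fallback when one is down. The service functions and their return values are hypothetical stand-ins for real network calls.

```python
from typing import Callable


def fetch_recommendations() -> list[str]:
    # Hypothetical service call that is currently failing.
    raise ConnectionError("recommendation service unavailable")


def fetch_inventory() -> list[str]:
    # Hypothetical service call that is healthy.
    return ["sku-1", "sku-2"]


def call_with_fallback(call: Callable[[], list[str]], fallback: list[str]) -> list[str]:
    """Call one service; on failure, return the fallback instead of raising."""
    try:
        return call()
    except Exception:
        # The failure stays contained to this one call.
        return fallback


def build_page() -> dict[str, list[str]]:
    """Assemble a response from independent services."""
    return {
        "recommendations": call_with_fallback(fetch_recommendations, []),
        "inventory": call_with_fallback(fetch_inventory, []),
    }


if __name__ == "__main__":
    print(build_page())
```

Here the recommendations section degrades to an empty list while inventory is still served. A real system would typically add timeouts and logging around each call.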

Case Study — Global Computing Provider

A global enterprise delivering electronics and computing solutions was operating on a monolithic legacy application that constrained agility, scalability, and cost efficiency.

The team decomposed the system into 30 independently deployable microservices using an Infrastructure on Demand pattern, migrated to a Kubernetes environment, and automated provisioning workflows with Ansible. Infrastructure-agnostic code reduced cloud vendor lock-in, and localization support was added for four languages.

The result: 63% cost reduction through Infrastructure on Demand and streamlined hosting, standardized deployments across all environments, and Kubernetes startup times under one hour.

Modernizing How You Ship: DevOps & Quality Engineering

Modern code deployed via manual release processes is still a legacy workflow. Microservices and new architecture only deliver their value when paired with modern delivery practices.

CI/CD pipelines eliminate “integration hell,” where monthly merges create massive conflicts, by replacing those merges with daily or hourly automated integration and deployment. Intelligent Quality Engineering replaces slow, manual QA with AI-assisted automation: automated API/UI testing on every commit, synthetic test data for compliance, and AI-generated unit tests.

Case Study — Medical Affairs Platform

A global medical affairs software company needed to accelerate delivery velocity on a next-generation SaaS platform without compromising quality or compliance.

The engagement embedded a dedicated AI-enabled engineering pod directly alongside the client’s team, transforming the SDLC with AI across engineering, QA, and product workflows. AI-assisted coding, refactoring, and PR review workflows drove a 40–50% acceleration in development cycles. Sprint velocity increased by 28% — delivering more story points while simultaneously reducing bug counts.

On the QA side, AI-generated test cases and structured defect reporting cut manual QA effort by 35–45%. Perhaps most tellingly, backlog preparation time dropped from five days to one hour through AI-driven epic-to-story conversion.

The impact extended beyond speed: API design consistency improved across the platform, and onboarding time for new engineers was dramatically reduced.

AI-Accelerated Modernization

These results are increasingly powered not just by skilled engineers and sound architecture, but by AI embedded directly into how software is built and delivered.

The biggest barrier to legacy application modernization is often missing documentation. Original developers leave; the why behind critical business logic disappears into thousands of lines of undocumented code. Our Gorilla Logic Construct™ framework — a proprietary system of reusable assets, reference implementations, and a three-tier adoption model — can address this.

Construct™ organizes AI implementation into three progressive levels:

- Tasks: Individual automated actions that make daily work faster — PR review automation, commit generation, meeting transcript summaries. Domain-agnostic and immediately deployable.

- Workflows: Connected tasks working in sequence, coordinated by humans, building institutional knowledge. The System Documentation Workflow, for example, chains code analysis, documentation generation, verification, and formatting to produce comprehensive technical documentation for legacy codebases — in hours, not weeks.

- Orchestration: The most sophisticated tier, where AI agents handle entire processes autonomously with human oversight. Successful orchestration requires deep domain knowledge and thorough discovery; a generic approach will not work out-of-the-box in complex enterprise environments.

Construct™ also includes a library of reusable assets — battle-tested prompts, pre-built agents, standardized templates, and reference architectures derived from real client engagements — so each new project starts closer to the finish line.

Why Legacy Application Modernization Projects Fail

Before committing to a modernization program, it’s worth naming the real reasons these efforts fall short, because many do. Industry data suggests that as many as 79% of application modernization projects miss timelines or budget targets. The patterns are consistent.

Scope without sequencing. Teams attempt to modernize everything at once, lose momentum, and stall. The fix: start with a bounded, high-value domain and establish a repeatable pattern before scaling.

Underestimating data migration complexity. Code is transient; data is permanent. Organizations that treat data migration as an afterthought discover mid-project that moving decades of production data cleanly — without corruption or downtime — is the hardest problem in the engagement.

Organizational resistance. Technical transformation outpaces cultural change. Engineers familiar with legacy systems resist new toolchains. Business stakeholders lose confidence when early milestones slip. Modernization requires change management alongside architecture decisions.

Inadequate rollback planning. Every deployment needs an automated rollback capability. If new code fails, the system must revert instantly. Teams that skip this discipline pay for it in outages.
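
As a rough sketch of what automated rollback means in practice, the example below deploys a version, runs a health check, and reverts if the check fails. The `Deployer` class and the health-check callback are hypothetical; a real pipeline would delegate both to its deployment platform.

```python
from typing import Callable


class Deployer:
    """Tracks the live version and reverts automatically on a failed check."""

    def __init__(self, current_version: str) -> None:
        self.current_version = current_version

    def deploy(self, new_version: str, health_check: Callable[[str], bool]) -> bool:
        """Switch to new_version; roll back if the health check fails."""
        previous = self.current_version
        self.current_version = new_version
        if health_check(new_version):
            return True
        # Health check failed: restore the last known-good version.
        self.current_version = previous
        return False


if __name__ == "__main__":
    deployer = Deployer("v1")
    ok = deployer.deploy("v2", health_check=lambda version: False)
    print(ok, deployer.current_version)
```

The point of the design is that the revert path needs no human decision: the previous version is recorded before the switch, so a failed check restores it immediately.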

Modernizing the wrong things first. McKinsey has observed that companies often spend heavily modernizing applications that aren’t significant contributors to technical debt. A proper assessment phase — with portfolio inventory, dependency mapping, and business value scoring — is not optional.

The Modernization Imperative

Enterprise technology leaders are right to be cautious about modernization. The history of failed projects is long, and the risks are real. But the calculus has shifted. With AI now embedded in delivery workflows, with patterns like Strangler Fig removing the need for risky big-bang rewrites, and with frameworks like Construct™ codifying what good looks like across engagements, the question is no longer whether modernization is achievable — it’s whether your organization can afford another year of compounding debt while competitors who already modernized pull further ahead.

The path from assessment to optimization is well-defined — inventory the portfolio, select the right approach per application, execute incrementally, and retire what no longer serves the business. The real variable isn’t the roadmap. It’s the decision to start.

The companies that move deliberately, with the right architecture and the right partner, will inherit the market. The ones that wait will spend the next decade paying interest on a debt they chose not to address.

The three clocks don’t stop. Let’s talk about where to start — and how to build momentum that lasts.
