By Erick Leiva Lopez, AI Delivery Manager at Gorilla Logic

Success in AI-Enabled Engineering is rarely a tooling story; it’s an operating model story. If you accelerate code creation inside an unchanged SDLC, you often just push work faster into the same bottlenecks: unclear requirements, risky deployments, slow approvals, and manual testing.

At Gorilla Logic, we approach AI with product thinking: we start with the problem, define success with KPIs, engineer workflows with explicit inputs/outputs and guardrails, and then scale reusable assets that compound across teams.

- Faster Code ≠ Faster Delivery

- The Gorilla Logic Enablement Playbook: Learn → Practice → Scale

- AI-Enabled Engineering as a Product Loop

- Where AI Productivity Actually Comes From: Context + Workflow, Not just Code Gen

- Gorilla Logic Catalog: Targeted Reusable AI Assets Across the SDLC

- The Executive's Framework for Quantifying AI Impact, or Beyond Vanity Metrics: A Scorecard for Real AI-Enabled Engineering ROI

- The Next Level Engineered Workflows (Gorilla Logic Construct™)

- A Gorilla Logic Success Story: Time-to-Value Cut From 7 Days to 4 Days by Engineering AI Across the SDLC

- Gorilla Logic Delivery Effectiveness in ROI Terms

- Conclusion: AI That Executives Can Trust Is Engineered

- Related Content

Faster Code ≠ Faster Delivery

IDE (integrated development environment) copilots can speed up individual coding tasks, but delivery performance is a system outcome. Research shows both strong gains and sobering limits depending on context:

- Controlled experiments have shown major speedups for specific coding tasks (e.g., GitHub Copilot users completing a task ~55.8% faster).

- In a randomized controlled trial with experienced open-source developers working in their own repositories, AI tool usage made them ~19% slower on average.

- Surveys show developers save time with AI, yet organizational friction (finding information, context switching, unclear direction) can consume those gains.

The executive takeaway: avoid measuring AI impact via perception alone. Track both perceived productivity (including developer confidence) and system metrics (flow, reliability, quality).

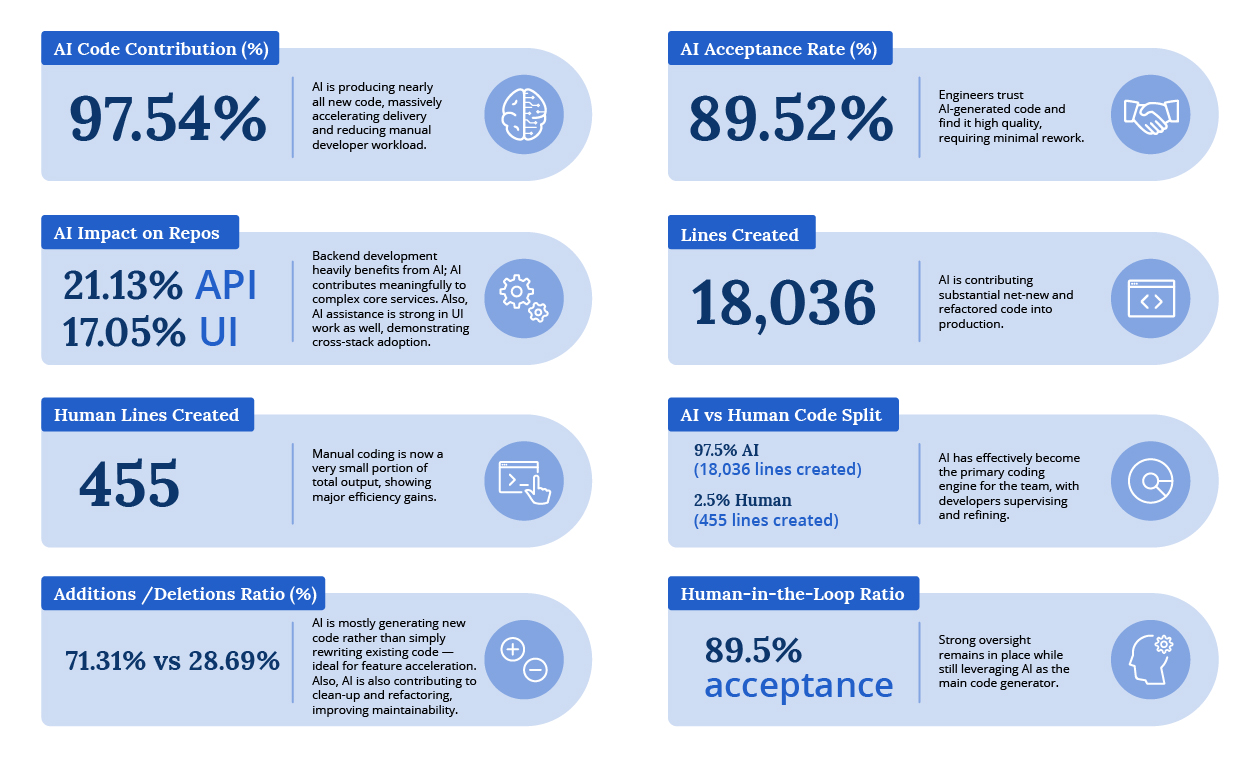

Gorilla Logic Cursor Metrics example from one GL Pod.

The Gorilla Logic Enablement Playbook: Learn → Practice → Scale

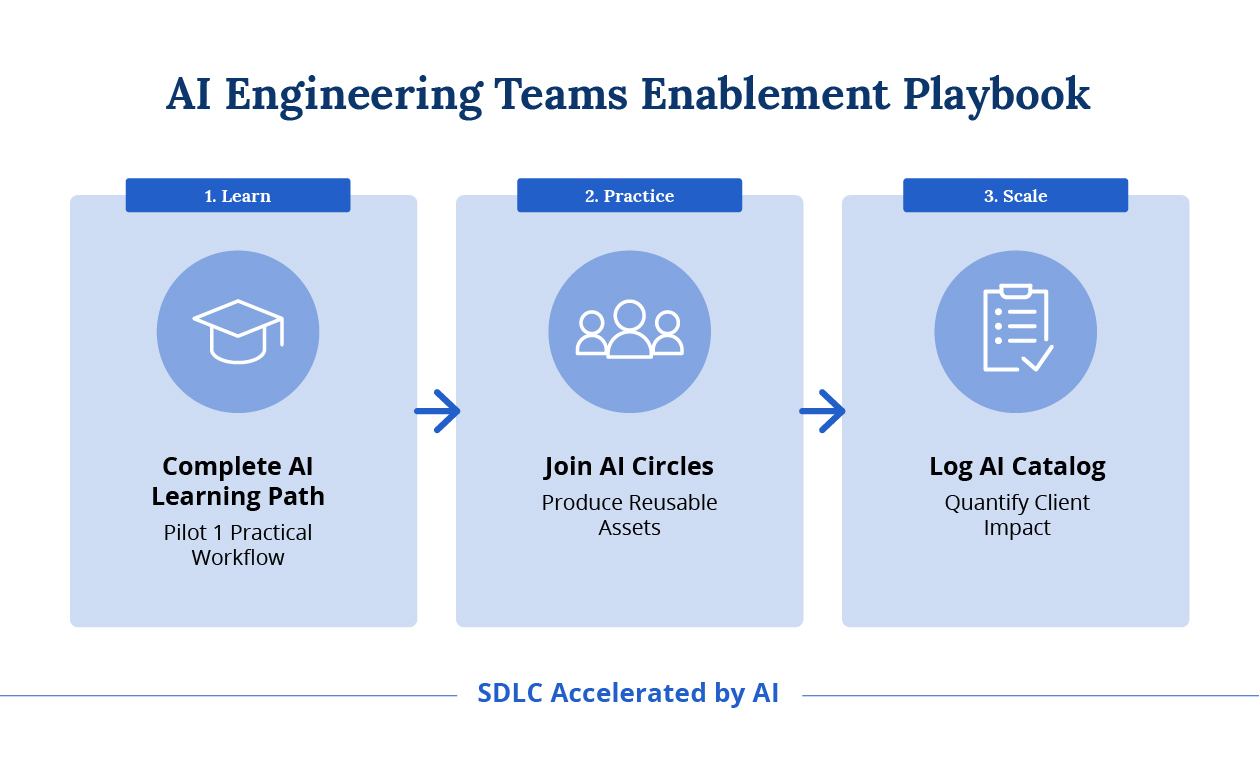

Sustainable AI adoption requires a learning flywheel, not an announcement. Our enablement model progresses in three steps:

Gorilla Logic AI Engineering Teams Enablement Playbook.

- GL Learning Path: specific trainings to build shared literacy in tools, prompting patterns, risks, and quality expectations.

- GL Circles: dedicated time and space for people to explore and apply AI to real delivery work, sharing patterns and anti-patterns in a safe setting.

- GL Catalog: targeted SDLC improvements that are reusable, governed, and measurable.

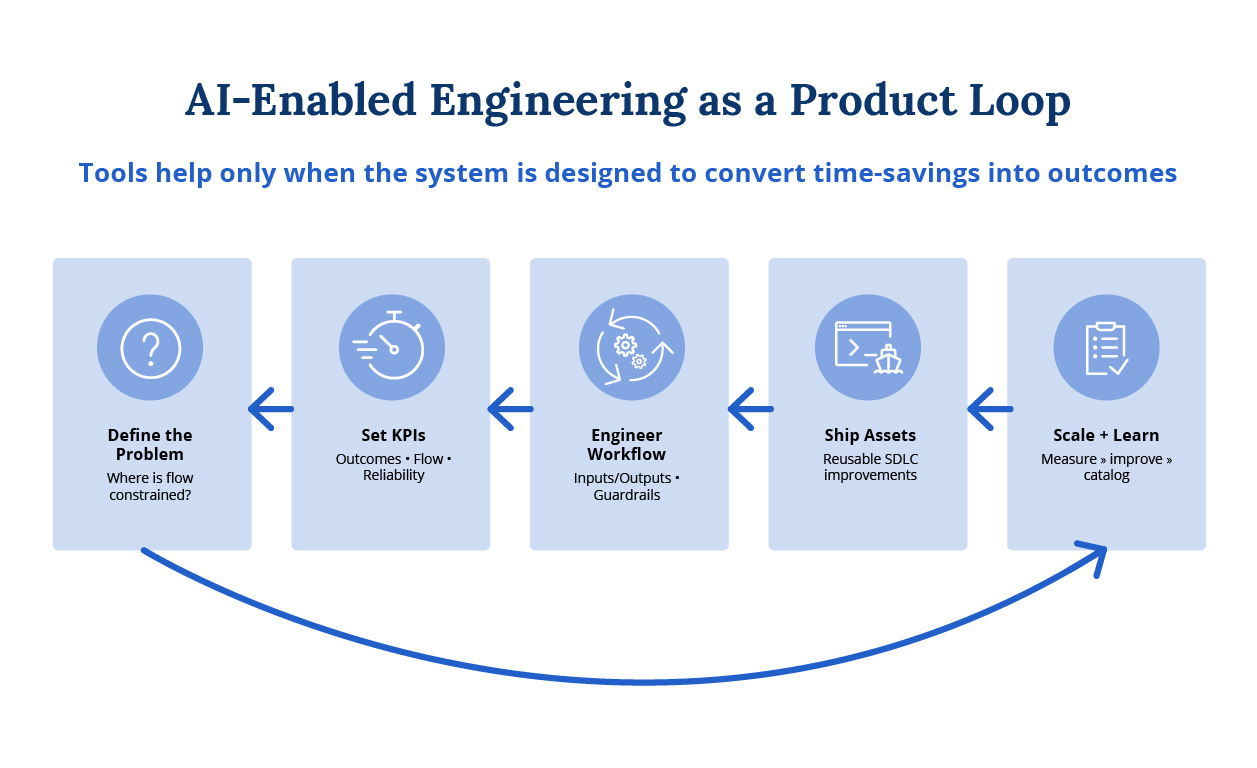

AI-Enabled Engineering as a Product Loop

High-performing AI programs look like product management applied to the SDLC. They start with a bottleneck, define measurable success, engineer a workflow, ship reusable assets, and continuously improve.

AI-Enabled Engineering as a product loop (problem → KPI → workflow → assets → scale).

Where AI Productivity Actually Comes From: Context + Workflow, Not just Code Gen

The most successful implementations concentrate on removing friction across the whole lifecycle, not just generating code. Atlassian’s DevEx research argues developers spend a minority of their time coding and can still lose 10+ hours/week to inefficiencies like finding information and tool context switching.

At Gorilla Logic, that translates into a simple operating rule: AI only performs at its best when it has the right context. We prioritize high-signal sources that reflect “how the work actually happens”: the codebase and repository history (PRs, commits, tests), architecture decision records and technical docs, and the system of record for delivery (backlog items, acceptance criteria, QA artifacts, incidents/runbooks). Then we use retrieval and workflow integration so the model is grounded in those artifacts at the moment they are needed, rather than relying on generic prompts.

That aligns with a core implementation rule: invest in context, retrieval, and workflow integration. In Stack Overflow’s developer survey analysis, the most-cited challenge is that AI tools lack codebase/internal architecture/company context.

McKinsey’s developer lab findings echo this: off-the-shelf tools don’t know project and organizational needs; developers must supply context and leaders must provide training, use-case selection, and risk controls.
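To make the idea of grounding concrete, here is a minimal sketch of retrieving project artifacts and prepending them to a task prompt. It is an illustration under stated assumptions, not Gorilla Logic's implementation: the `Artifact` shape, the function names, and the sample texts are all hypothetical, and the keyword-overlap ranking is a simple stand-in for whatever retrieval method a real system would use.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Artifact:
    """A piece of delivery context (hypothetical shape, for illustration)."""
    source: str  # e.g. "adr", "backlog", "runbook"
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase the text and split it into a set of alphanumeric tokens."""
    cleaned = "".join(ch.lower() if ch.isalnum() else " " for ch in text)
    return set(cleaned.split())


def retrieve(query: str, artifacts: list[Artifact], top_k: int = 2) -> list[Artifact]:
    """Rank artifacts by how many tokens they share with the query."""
    query_tokens = tokenize(query)
    scored = [(len(query_tokens & tokenize(a.text)), a) for a in artifacts]
    # Drop artifacts with no overlap, then sort highest score first.
    relevant = [pair for pair in scored if pair[0] > 0]
    relevant.sort(key=lambda pair: pair[0], reverse=True)
    return [artifact for _, artifact in relevant[:top_k]]


def build_prompt(task: str, artifacts: list[Artifact]) -> str:
    """Prepend retrieved context so the model sees project-specific facts."""
    context = "\n".join(f"[{a.source}] {a.text}" for a in retrieve(task, artifacts))
    return f"Context:\n{context}\n\nTask:\n{task}"


# Made-up sample artifacts, not taken from any real engagement.
artifacts = [
    Artifact("adr", "Payments service uses idempotency keys for retry safety"),
    Artifact("runbook", "Restart the cache cluster when latency alerts fire"),
    Artifact("backlog", "Acceptance criteria: payments retry must not double charge"),
]
print(build_prompt("Write tests for payments retry logic", artifacts))
```

The point of the sketch is the shape of the flow: the prompt is assembled from the team's own artifacts at the moment of the task, so an unrelated runbook entry is left out while the relevant decision record and acceptance criteria are included.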

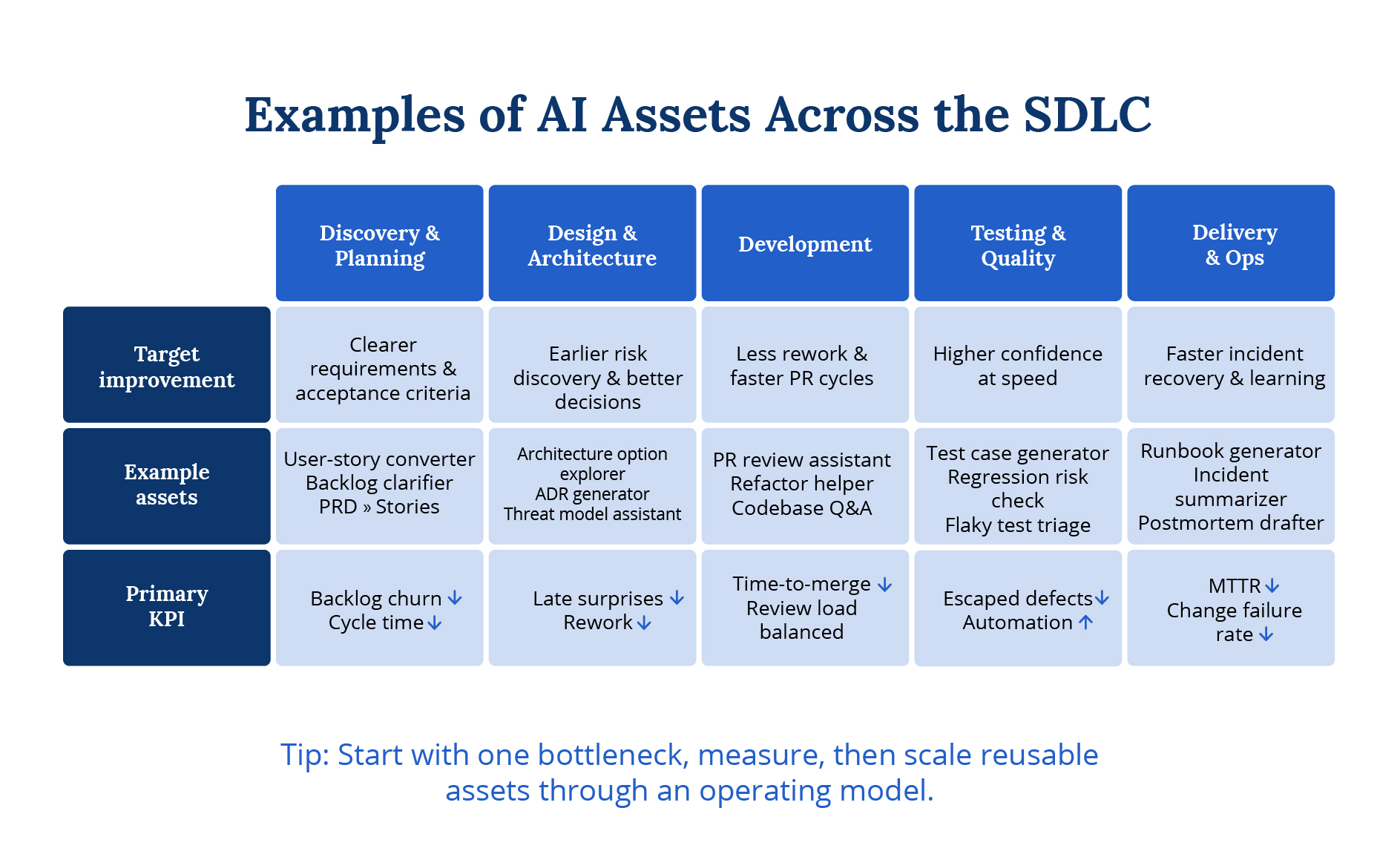

Gorilla Logic Catalog: Targeted Reusable AI Assets Across the SDLC

Creating the Gorilla Logic Catalog turns isolated prompts into repeatable value. Below are examples of small but targeted improvements that are logged in the Gorilla Logic Catalog and can be reused.

From Theory to Practice: AI Assets That Drive SDLC Efficiency.

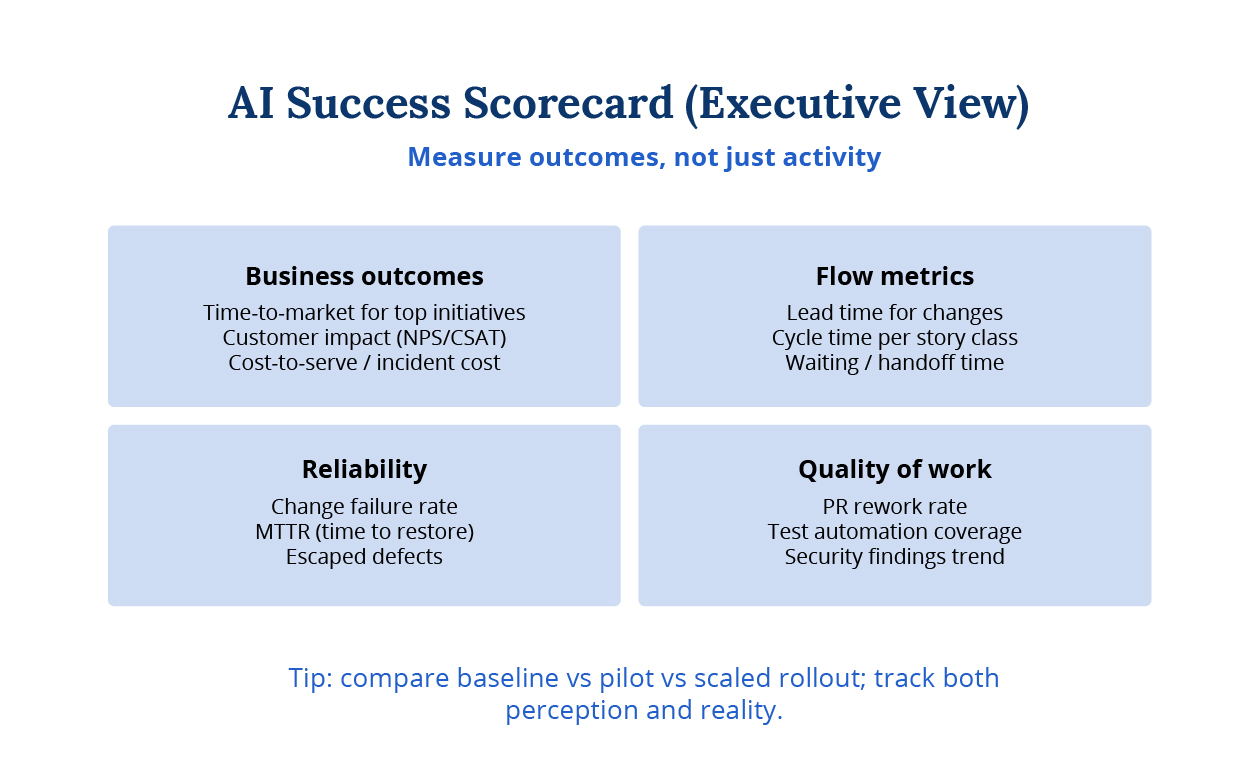

The Executive’s Framework for Quantifying AI Impact, or Beyond Vanity Metrics: A Scorecard for Real AI-Enabled Engineering ROI

To earn trust at the C-level, measurement must connect AI to outcomes: demonstrated results and a return on the investment. We recommend a scorecard that blends business outcomes, flow, reliability, and quality, using widely adopted delivery metrics (e.g., DORA-style measures) as part of the picture.
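One way to picture such a scorecard is as a small data structure compared across two periods. The sketch below is illustrative only: the field names are assumptions loosely modeled on DORA-style measures, and apart from the 7-day and 4-day Time-to-Value figures and the ~8 bugs per sprint baseline mentioned elsewhere in this article, the sample values are placeholders rather than measured results.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Scorecard:
    """One measurement period. Field names are illustrative assumptions."""
    time_to_value_days: float       # outcome/flow: idea to verified release
    deploys_per_week: float         # flow: deployment frequency
    change_failure_rate: float      # reliability: share of deploys that fail
    hours_to_restore: float         # reliability: time to restore service
    escaped_bugs_per_sprint: float  # quality


# For each metric: True when a larger value is better.
HIGHER_IS_BETTER = {
    "time_to_value_days": False,
    "deploys_per_week": True,
    "change_failure_rate": False,
    "hours_to_restore": False,
    "escaped_bugs_per_sprint": False,
}


def compare(baseline: Scorecard, current: Scorecard) -> dict[str, str]:
    """Label each metric as improved, regressed, or unchanged."""
    verdicts = {}
    for name, higher_is_better in HIGHER_IS_BETTER.items():
        before, after = getattr(baseline, name), getattr(current, name)
        if after == before:
            verdicts[name] = "unchanged"
        elif (after > before) == higher_is_better:
            verdicts[name] = "improved"
        else:
            verdicts[name] = "regressed"
    return verdicts


# Placeholder values for illustration, not results from a real engagement.
baseline = Scorecard(time_to_value_days=7.0, deploys_per_week=2.0,
                     change_failure_rate=0.15, hours_to_restore=6.0,
                     escaped_bugs_per_sprint=8.0)
current = Scorecard(time_to_value_days=4.0, deploys_per_week=3.0,
                    change_failure_rate=0.15, hours_to_restore=5.0,
                    escaped_bugs_per_sprint=9.0)

for metric, verdict in compare(baseline, current).items():
    print(f"{metric}: {verdict}")
```

In this made-up comparison, flow improves while escaped bugs regress, which is exactly the situation a blended scorecard is meant to surface before a speedup is reported as a win.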

AI success scorecard: outcomes + flow + reliability + quality.

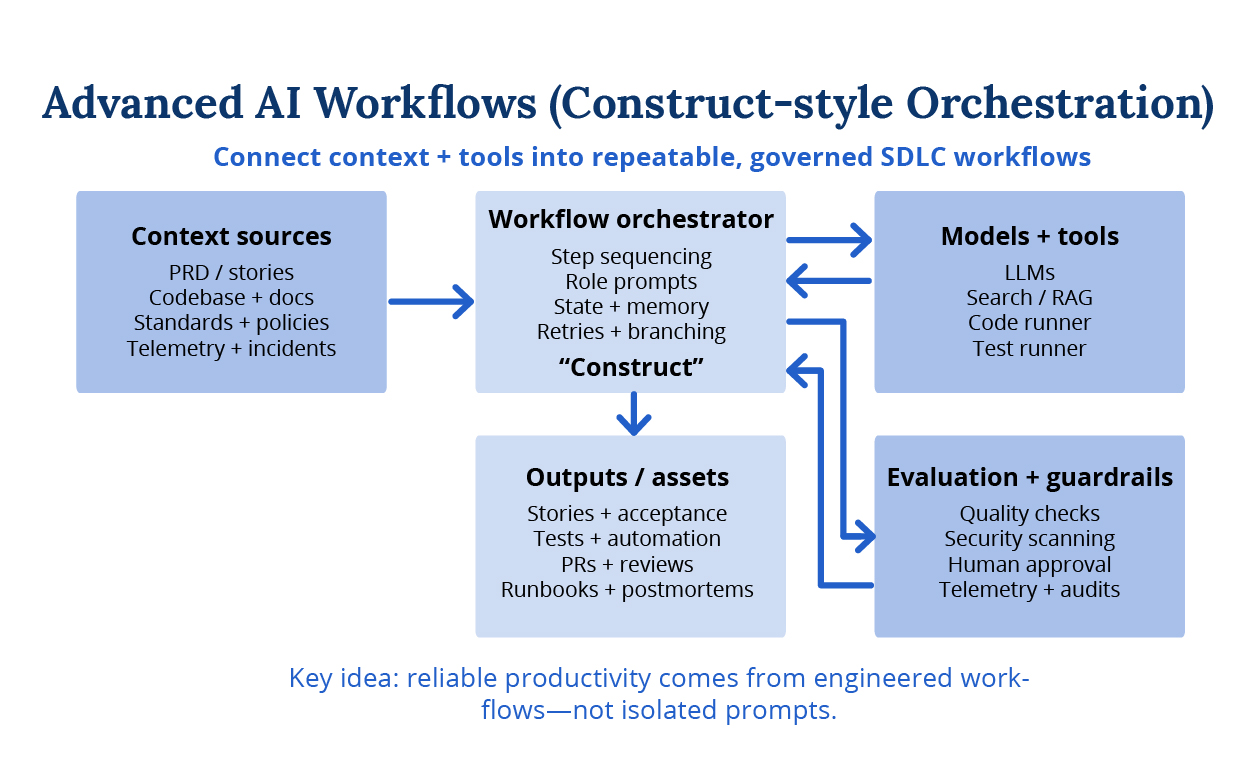

The Next Level Engineered Workflows (Gorilla Logic Construct™)

The next level is orchestrating multiple AI steps into governed workflows, where context is injected deliberately, outputs are evaluated, and human approval is built into the system. This is how we move from opportunistic usage to an engineered, AI-Enabled SDLC.

Gorilla Logic Construct™ orchestration: connect context + tools + guardrails into repeatable workflows.

A Gorilla Logic Success Story: Time-to-Value Cut From 7 Days to 4 Days by Engineering AI Across the SDLC

In a highly regulated medical-industry engagement, traceability isn’t optional. A global pharma company asked Gorilla Logic to lead the implementation of AI across its software engineering and product teams. Every change needed predictable downstream artifacts (acceptance criteria, test cases, execution steps, and defect reporting) without slowing delivery.

Baseline (one Gorilla Logic Pod): 10 engineers shipping ~6 PRs per sprint. The team had strong test coverage, yet still saw ~8 bugs per sprint. The real constraint was flow: merge approvals commonly took 2-3 days, and overall Time-to-Value (ready-to-build → merged → released → verified) averaged ~7 days.

We started with targeted, easy-to-measure AI assets:

- AI Test Case Generator (Zephyr format): standardized test artifacts and saved ~24 hours/month per QA engineer.

- AI QA Bug Reporter: improved defect consistency and accelerated triage, saving ~16 hours/month per QA engineer.

These were meaningful local wins. The system-level gain arrived when we engineered the end-to-end chain so every step produced a clean, structured output that became a reliable input for the next step, while keeping humans in the loop at high-risk points.

Backlog Clarifier → Acceptance Criteria → Testing Steps → Zephyr Test Cases → Bug Reporter

We treated each step like a product interface: explicit inputs/outputs, domain context (standards, glossary, examples), and human approval gates. This prevented one bad step from cascading into the next step’s input.
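The idea of treating each step as a product interface can be sketched as a small chain runner. This is a hypothetical illustration, not the Gorilla Logic Construct™ implementation: the `Step`, `StepResult`, `GateRejected`, and `run_chain` names are invented here, and the lambdas stand in for AI-assisted steps. Each step declares the keys its output must contain, and steps flagged as high-risk require a reviewer's approval before their output is passed on.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class StepResult:
    """Structured output of one step; it becomes the next step's input."""
    step: str
    payload: dict


@dataclass(frozen=True)
class Step:
    name: str
    run: Callable[[dict], dict]      # stand-in for an AI-assisted step
    required_keys: tuple[str, ...]   # explicit output contract
    needs_approval: bool = False     # human gate at high-risk points


class GateRejected(Exception):
    """Raised when validation or human approval stops the chain."""


def run_chain(steps: list[Step], initial: dict,
              approve: Callable[[str, dict], bool]) -> list[StepResult]:
    """Run steps in order, halting before bad output reaches the next step."""
    payload, history = initial, []
    for step in steps:
        output = step.run(payload)
        missing = [key for key in step.required_keys if key not in output]
        if missing:
            raise GateRejected(f"{step.name}: missing {missing}")
        if step.needs_approval and not approve(step.name, output):
            raise GateRejected(f"{step.name}: rejected by reviewer")
        history.append(StepResult(step.name, output))
        payload = output
    return history


# Toy steps with made-up logic; real steps would call a model with context.
steps = [
    Step("backlog_clarifier", lambda p: {"story": p["raw"].strip()}, ("story",)),
    Step("acceptance_criteria",
         lambda p: {"story": p["story"],
                    "criteria": [f"Given {p['story']}, then it works"]},
         ("story", "criteria"), needs_approval=True),
    Step("test_cases",
         lambda p: {"cases": [f"Verify: {c}" for c in p["criteria"]]},
         ("cases",)),
]

history = run_chain(steps, {"raw": "  User can reset password  "},
                    approve=lambda name, output: True)
print([result.step for result in history])
```

Because validation and approval happen before the output is handed forward, a rejected or malformed step stops the chain instead of feeding the next step bad input.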

Result: Time-to-Value dropped from ~7 days to ~4–5 days (a ~29%–43% improvement). We summarize this as “25%+ faster delivery” at the program level to stay conservative across scope mix and quality gates. The rollout took ~4 months, iterating step-by-step with clear KPIs.

| Segment | Baseline (days) | After (days) |
| --- | --- | --- |
| Ready-to-start / queue | 0.5 | 0.5 |
| Build (coding + PR prep) | 2.0 | 1.5 |
| Review + approvals | 3.0 | 1.0 |
| Release lead time | 1.0 | 0.5 |
| Verification | 0.5 | 0.5 |
| Total Time-to-Value | 7.0 | ~4.0 |
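The arithmetic behind these figures can be checked directly. The short sketch below sums the segment values from the table and computes the percentage reduction for both ends of the ~4–5 day range; the variable and function names are illustrative only.

```python
# Segment durations in days as (baseline, after), copied from the table.
segments = {
    "Ready-to-start / queue": (0.5, 0.5),
    "Build (coding + PR prep)": (2.0, 1.5),
    "Review + approvals": (3.0, 1.0),
    "Release lead time": (1.0, 0.5),
    "Verification": (0.5, 0.5),
}

baseline_total = sum(before for before, _ in segments.values())
after_total = sum(after for _, after in segments.values())


def reduction_pct(before: float, after: float) -> float:
    """Percentage reduction going from `before` to `after`."""
    return (before - after) / before * 100


print(f"Baseline: {baseline_total} days, after: {after_total} days")
print(f"Best case (7 -> 4 days): {reduction_pct(7.0, 4.0):.0f}% reduction")
print(f"Conservative case (7 -> 5 days): {reduction_pct(7.0, 5.0):.0f}% reduction")

# Which segment contributed most of the savings?
saved_total = baseline_total - after_total
for name, (before, after) in segments.items():
    if before > after:
        share = (before - after) / saved_total * 100
        print(f"{name}: saved {before - after} days ({share:.0f}% of savings)")
```

Run as written, the totals come to 7.0 and 4.0 days, and the review and approval segment accounts for two of the three days saved, consistent with the observation that the real constraint was flow rather than coding speed.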

Gorilla Logic Delivery Effectiveness in ROI Terms

A 25% improvement in Time-to-Value translates directly into ROI because delivery capacity is one of the biggest levers on your engineering investment. If the same team can deliver validated outcomes 25% faster, you’re effectively getting 25% more throughput per dollar. In practical terms, if a Pod costs $X per month, a sustained 25% gain implies roughly 0.25 × $X of additional delivery capacity unlocked each month, before even counting second-order benefits like fewer handoffs, less rework, and faster learning cycles.

ROI is only “real” when the speedup is paired with guardrails (quality/reliability) so you’re not just moving faster, you’re moving faster safely.
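As a rough sketch of that reasoning, the function below applies the simplified framing used here (extra capacity = gain × Pod cost) and counts the gain only when every guardrail holds. The function names, the guardrail labels, and the $100,000 monthly Pod cost are hypothetical placeholders for $X, not real figures.

```python
def unlocked_capacity(pod_cost_per_month: float, gain: float) -> float:
    """Simplified framing from the text: extra capacity = gain x Pod cost."""
    return pod_cost_per_month * gain


def guarded_capacity(pod_cost_per_month: float, gain: float,
                     guardrails_ok: dict[str, bool]) -> float:
    """Count the gain only when every quality/reliability guardrail holds."""
    if all(guardrails_ok.values()):
        return unlocked_capacity(pod_cost_per_month, gain)
    return 0.0


pod_cost = 100_000.0  # hypothetical stand-in for $X

healthy = {"change_failure_rate_stable": True, "escaped_bugs_stable": True}
degraded = {"change_failure_rate_stable": True, "escaped_bugs_stable": False}

print(guarded_capacity(pod_cost, 0.25, healthy))
print(guarded_capacity(pod_cost, 0.25, degraded))
```

Zeroing out the gain when a guardrail fails is a deliberately strict choice for illustration; a real scorecard might discount the gain rather than discard it.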

AI-Enabled Engineering: A practical 30-60-90 day starting plan

- Days 0–30: Select one bottleneck; baseline current performance; train the team on tools, prompting, and risk patterns.

- Days 31–60: Run an AI Circle pilot; engineer a workflow with explicit inputs/outputs; measure impact with agreed KPIs.

- Days 61–90: Turn the winning workflow into an asset; document guardrails; expand to the next bottleneck and repeat.

Conclusion: AI That Executives Can Trust Is Engineered

AI-Enabled Engineering succeeds when leaders treat it as a systematic approach to delivery effectiveness, not a technology rollout. The organizations pulling ahead aren’t debating which copilot to buy; they’re identifying where work stalls, defining what improvement actually means, and engineering workflows that turn faster execution into measurable business outcomes. This is the CEO product-thinking lens at work: Problem → KPI → Workflow → Assets → Scale.

The metric that matters most is Time-to-Value: how fast your teams convert a prioritized idea into validated capability in production. When you systematically reduce Time-to-Value, you’re forced to confront the actual friction points: unclear requirements, approval delays, context gaps, manual verification, and unnecessary handoffs. You build executive confidence not through “AI-generated lines of code” but by pairing faster flow with demonstrable improvements in reliability and quality.

At Gorilla Logic, we scale this through Learn → Practice → Scale. We establish shared AI literacy, validate targeted improvements against real delivery constraints, and capture proven patterns in a governed catalog so gains compound rather than fade. The advanced play is Gorilla Logic Construct™ orchestration: structuring workflows with deliberate context injection, validated inputs and outputs, automated evaluation, and human checkpoints, so improvements cascade forward instead of creating downstream chaos.

If you’re leading engineering, product, or technology, your first move is concrete:

- Identify one delivery bottleneck where work predictably slows or breaks

- Set one primary KPI (Time-to-Value) backed by 2–3 quality and reliability guardrails

- Engineer the end-to-end workflow with structured handoffs and human approval gates

- Capture it as a reusable asset and apply the pattern to the next constraint

AI becomes a real advantage when it stops being something your developers tinker with in their spare time and becomes the way your whole organization delivers. That’s when the investment pays off (and keeps paying off).

Related Content

What It Really Takes to Embed AI Across the SDLC

From ROI to Return on Autonomy: How Agentic AI Reshapes the Enterprise

How an AI-Enabled Engineering Pod Accelerated a Medical Affairs Platform