There’s a conversation happening behind closed doors at a lot of companies right now, and it goes something like this: AI coding assistants are deployed, adoption is high, developers seem genuinely faster — and yet the product is shipping at roughly the same cadence it always has.

Boards are asking about AI productivity gains. Private equity firms are modeling them. CEOs are demanding them. And engineering leaders are quietly trying to square those expectations with a reality that doesn’t quite match the headlines.

Something is getting lost between the tool and the outcome. The question is what.

The Wrong Scoreboard

The most common mistake organizations are making is measuring AI adoption instead of AI outcomes. They’ve rolled out the tools, encouraged experimentation, tracked license utilization — and concluded that because developers are using AI, the organization is getting more productive.

But software delivery isn’t a collection of isolated tasks. It’s a system — requirements, backlog refinement, architecture, coding, QA, security review, deployment, observability, incident response. Accelerating one part of that system doesn’t automatically accelerate the whole thing. It’s the difference between adding a lane to one stretch of highway and actually fixing the traffic.

Most organizations have added the lane in the middle and left everything else untouched.

The “Hourglass” Problem in Modern SDLCs

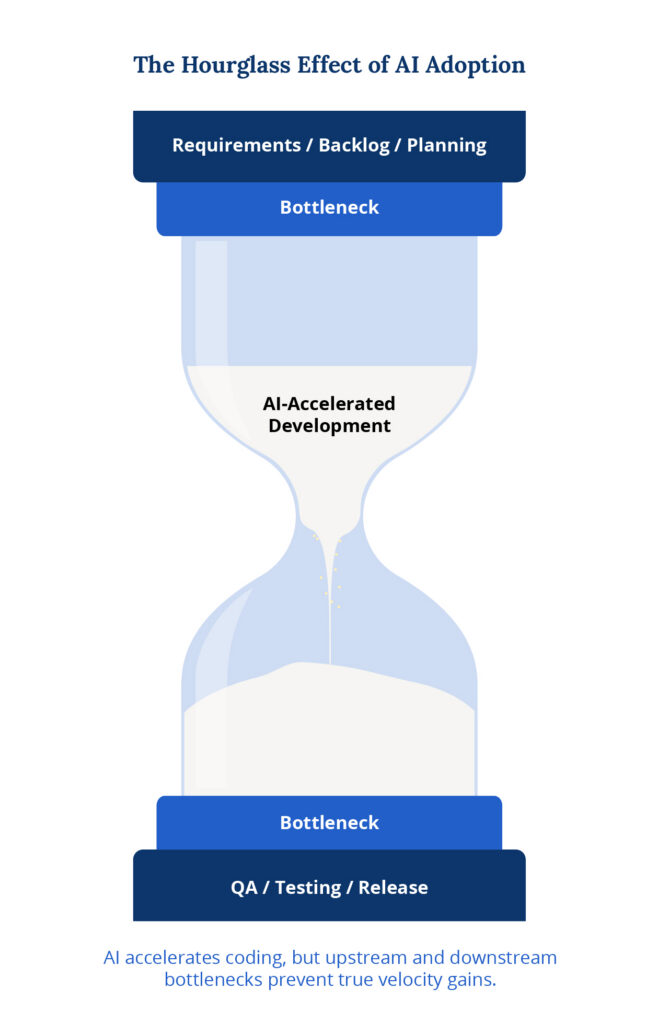

There’s a pattern that shows up consistently across engineering organizations that have leaned into AI: what you might call an hourglass-shaped development cycle.

AI is being applied heavily in the development phase — the narrow middle of the process. But the work still piles up on either side. Upstream, requirements come in vague or incomplete. Backlog refinement is slow. Context gets lost between business stakeholders and engineering teams. Downstream, QA is still largely manual. Regression testing creates delays. Release processes involve friction that no coding assistant can touch.

Code gets written faster in the middle.

But it gets stuck at the top and bottom.

When product ships at roughly the same cadence despite all the AI investment, executive confidence starts to erode, and understandably so. The gains are real; they're just in the wrong place.
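The arithmetic behind the hourglass is simple enough to sketch. The example below treats delivery as a chain of stages and takes steady-state throughput to be the capacity of the slowest one. The stage names, the weekly capacities, and the `pipeline_throughput` helper are all illustrative assumptions, not measurements from any real team.

```python
# Hypothetical weekly capacities (work items per week) for each stage.
# These numbers are made up purely for illustration.
def pipeline_throughput(capacities: dict[str, float]) -> tuple[str, float]:
    """Return the constraining stage and the steady-state throughput.

    Simplifying assumption: stages run in sequence, so the pipeline
    can go no faster than its slowest stage.
    """
    stage = min(capacities, key=capacities.get)
    return stage, capacities[stage]


before = {"requirements": 10, "coding": 12, "qa": 9, "release": 11}

# Assume AI doubles coding capacity and nothing else changes.
after = dict(before, coding=before["coding"] * 2)

print(pipeline_throughput(before))  # ('qa', 9)
print(pipeline_throughput(after))   # ('qa', 9)
```

Doubling the coding stage leaves the result unchanged, because QA was already the constraint. In this toy model, only raising the capacity of the slowest stage moves the number.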

Developer Productivity and Organizational Velocity Are Not the Same Thing

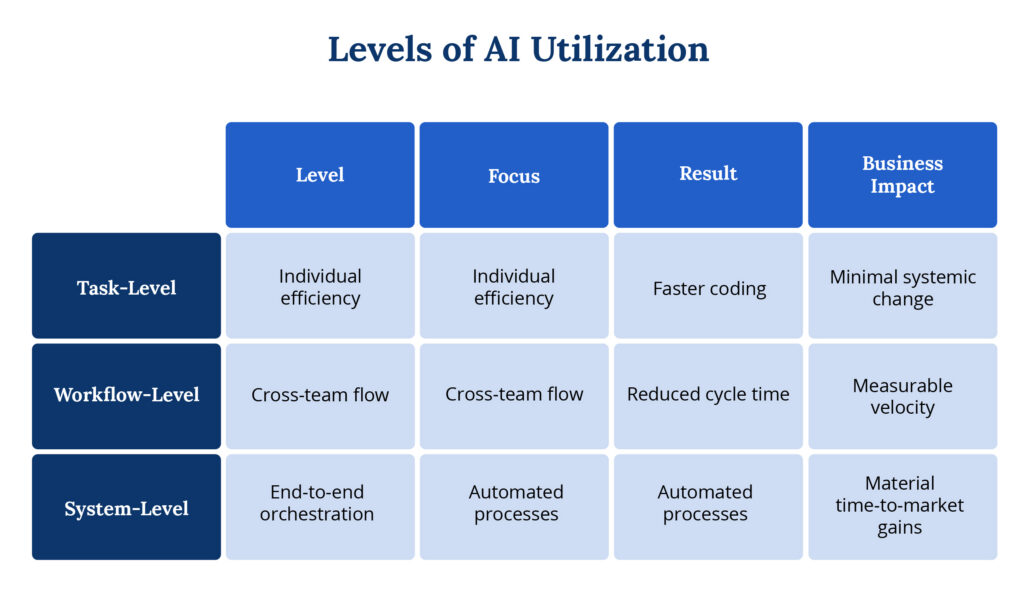

One of the biggest misconceptions in the AI conversation is equating developer efficiency with organizational velocity.

A developer who writes code faster is genuinely more productive. But enterprise product velocity is measured in lead time to production, cycle time, defect escape rate, deployment frequency, and mean time to resolve incidents. If those numbers don’t move, the business doesn’t move — regardless of what’s happening at the individual contributor level.
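To make a few of those measures concrete, here is a minimal sketch of how they might be computed from deployment records. The `Change` record, its field names, and the sample data are hypothetical, and the defect escape rate here is a simplified proxy (the share of shipped changes that later produced a defect), not a standard definition.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median


@dataclass
class Change:
    committed_at: datetime  # first commit for the change
    deployed_at: datetime   # when the change reached production
    escaped_defect: bool    # True if a defect surfaced after release


def delivery_metrics(changes: list[Change], window_days: int) -> dict[str, float]:
    """Summarize organization-level delivery metrics over a time window."""
    if not changes:
        raise ValueError("no changes in the window")
    lead_times = [
        (c.deployed_at - c.committed_at).total_seconds() / 3600 for c in changes
    ]
    return {
        "median_lead_time_hours": median(lead_times),
        "deploys_per_week": len(changes) / (window_days / 7),
        "defect_escape_rate": sum(c.escaped_defect for c in changes) / len(changes),
    }


# Made-up sample: four changes shipped over a 14-day window.
start = datetime(2024, 1, 1)
changes = [
    Change(start, start + timedelta(hours=24), False),
    Change(start + timedelta(days=2), start + timedelta(days=2, hours=48), True),
    Change(start + timedelta(days=5), start + timedelta(days=5, hours=72), False),
    Change(start + timedelta(days=9), start + timedelta(days=9, hours=96), False),
]
metrics = delivery_metrics(changes, window_days=14)
print(metrics)
```

Tracking numbers like these before and after an AI rollout is what separates an outcome measurement from a count of license seats.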

The organizations that are seeing significant, measurable improvements aren’t just equipping developers with better tools. They’ve built a measurement framework first, so they know where the real drag is. Then they’ve redesigned how work flows across teams, not just within them. Automating a pull request review helps. Automating how QA cycles integrate with deployment pipelines helps a lot more.

The distinction matters because isolated improvements don't compound. The gains accumulate only when the handoffs between stages improve as well.

Why Most AI Initiatives Stall

There are a few consistent reasons organizations get stuck in the “high adoption, low impact” zone.

The first is deploying tools before defining the problem. Without a clear view of where the system constraints actually are, AI just accelerates the wrong things. If your biggest bottleneck is slow requirements definition, a better code completion tool won’t help you.
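One rough way to get that view is to look at where elapsed time actually goes. The sketch below assumes you can export, for each work item, the hours it spent in each stage (waiting included). The `records` data and the `share_of_lead_time` helper are hypothetical and exist only to illustrate the calculation.

```python
from collections import defaultdict

# (work item, stage, hours spent in that stage including wait time).
# Illustrative values only.
records = [
    ("A", "requirements", 40), ("A", "coding", 8), ("A", "qa", 30), ("A", "release", 10),
    ("B", "requirements", 56), ("B", "coding", 6), ("B", "qa", 42), ("B", "release", 6),
]


def share_of_lead_time(rows):
    """Return (stage, share of total elapsed time), largest share first."""
    totals = defaultdict(float)
    for _item, stage, hours in rows:
        totals[stage] += hours
    grand_total = sum(totals.values())
    shares = [(stage, hours / grand_total) for stage, hours in totals.items()]
    return sorted(shares, key=lambda pair: pair[1], reverse=True)


for stage, share in share_of_lead_time(records):
    print(f"{stage:<13}{share:.0%}")
```

With this made-up data, requirements and QA account for roughly 85% of elapsed time and coding for about 7%, so a faster coding tool could recover only a small slice of the total.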

The second is treating AI as task automation rather than workflow redesign. Generating commit messages, accelerating test creation, and cleaning up pull requests are all useful, but none of them is transformative on its own. The gains that actually show up in business metrics come from rethinking how work moves across product management, engineering, QA, and DevOps together.

The third, and most underestimated, is organizational change. The companies reporting real productivity gains haven’t been experimenting for a few weeks. They’ve been evolving their approach over months, building structured training programs, defining standards, aligning leadership, and creating governance models that let AI scale without chaos. Productivity compounds — but only if you start early and move deliberately.

What Actually Drives 50–70% AI Productivity Gains

When organizations do see significant productivity improvements, it’s because they’ve taken a system-wide approach.

The pattern typically looks like this:

- Equip individuals with AI tools to improve task efficiency.

- Implement measurement frameworks to track velocity and quality.

- Redesign workflows across the SDLC.

- Introduce automation at the orchestration level — not just the task level.

The biggest gains don’t come from writing code faster.

They come from eliminating handoffs, reducing rework, automating QA cycles, accelerating incident response, and streamlining deployment processes.

When AI operates across workflows — not just within them — throughput changes.

That’s when velocity improves materially.

What to Do Now

If you are leading an engineering organization navigating this shift, three priorities stand out.

1. Evaluate your requirements process before optimizing your build process.

Faster AI output built on weak requirements accelerates the wrong outcomes. A growing set of AI tools can support this work: Atlassian Intelligence helps teams draft user stories and break down epics directly inside Jira and Confluence; general-purpose models like ChatGPT, Claude, and Gemini are effective for brainstorming, edge case identification, and gap analysis; Azure DevOps with Azure OpenAI can surface missing or conflicting requirements by processing documents and stakeholder inputs early in the cycle; and Notion AI helps teams query and organize structured requirements across projects.

These tools not only reduce ambiguity and accelerate elicitation — they amplify the quality of the human process behind them.

2. Treat QA capacity as a delivery constraint, not a downstream cost center.

If your testing function cannot absorb increased build velocity, your effective throughput has not improved; the bottleneck has simply moved downstream. Headcount, tooling, and test automation infrastructure all need to keep pace.

A growing set of AI-assisted QA tools can support this work: Testim and Mabl use machine learning to generate, maintain, and self-heal automated test suites as codebases evolve; GitHub Copilot can accelerate the authoring of unit and integration tests directly alongside application code; Applitools applies visual AI to detect UI regressions that traditional assertions routinely miss; and general-purpose models like ChatGPT, Claude, and Gemini are effective for generating test cases, identifying edge cases, and drafting acceptance criteria from existing requirements.

These tools not only reduce the manual burden on QA teams — they amplify the coverage and consistency of the human judgment behind them.

3. Document what your AI cannot know.

Edge cases, business rules, customer history, and architectural rationale need to exist somewhere outside someone’s memory. This documentation becomes the context that separates AI-assisted teams that ship confidently from those that ship fast and fix often.

The Clock Is Moving Faster Than Most Realize

Here’s the uncomfortable part. If your organization is still in the experimentation phase — running pilots, encouraging individual adoption, waiting to see what sticks — you’re probably not a few weeks behind the curve. You may be six to twelve months behind it.

That's because productivity gains from AI don't come from installing a tool. They come from redesigning how work gets done around it. And that redesign takes time: time to build measurement frameworks, time to retrain teams, time to untangle the processes that have calcified over years. The organizations reporting real gains started that work before it felt necessary and kept moving deliberately even when it did not yet feel urgent.

AI is genuinely changing the economics of software development. It’s lowering the cost of iteration. It’s making legacy modernization more feasible. It’s compressing feedback loops that used to take days into something closer to hours. But those advantages don’t accrue automatically. They accrue to the organizations that treat AI as an operational challenge, not just a tooling decision.

The question worth asking in your next leadership meeting isn’t “are our developers using AI?” It’s “has our product velocity measurably improved?” If the answer is unclear, the strategy probably needs another look.

The hourglass has two bottlenecks. Both of them need attention.

This article is based on a recent conversation between Drew Naukam, our CEO, and Bob Graham, our Chief Growth Officer. You can watch the full discussion here.